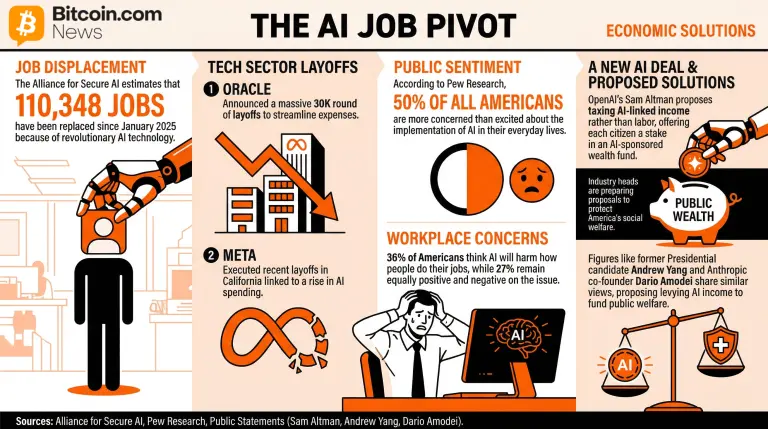

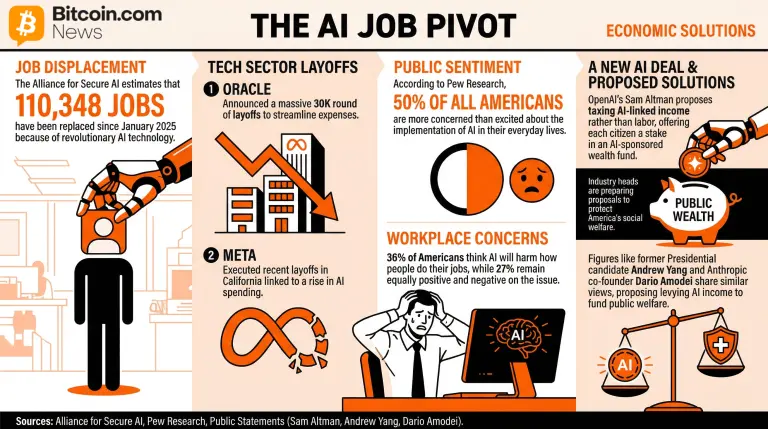

According to reports, over 100,000 jobs have already been replaced, at least in part, by AI in the U.S. Americans are concerned about what’s happening, with half of the population feeling more concerned than excited about the increased use of this tech.

Key Takeaways:

- After 110,348 jobs lost to AI since 2025, Oracle and Meta layoffs show tech firms will keep shifting funds to AI.

- Pew Research found 50% of Americans are more concerned than excited about AI in their daily lives, while 36% think it will harm how people do their jobs.

- To combat future labor impacts, OpenAI’s Sam Altman proposed an AI wealth fund and taxing AI revenue to fund welfare.

Worries Keep Rising as U.S. AI-Linked Layoffs Surpass 100K

Artificial intelligence (AI) tech is increasingly being integrated into the lives of American citizens. Still, not everyone shares the same enthusiasm for this adoption, especially regarding its effects on the labor market.

While there are no official numbers, the Alliance for Secure AI, an organization that seeks to educate the public about the implications of AI, estimates that 110,348 jobs have been replaced since January 2025 because of this revolutionary technology.

The most recent announcements included in that count are Oracle’s latest round of 30,000 layoffs and Meta’s recent layoffs in California, both of which were reportedly linked to a rise in AI spending and the need to streamline and cut expenses as the tech sector shifts.

Nonetheless, as this pivot unfolds, Americans remain tepid about the impact of AI in both their daily lives and the labor environment. According to Pew Research, 50% of all Americans were more concerned than excited about the implementation of AI in their everyday lives.

In the same way, the survey reported an increasing concern about the influence that AI tech will have on how people do their jobs. In this regard, 36% think that AI will harm how people do their jobs, while 27% were equally positive and negative on the issue.

As the situation unfolds, industry heads are already preparing proposals to protect America’s social welfare as the industry gets less labor-intensive and more AI-focused. OpenAI’s Sam Altman recently proposed a new AI deal that would tax AI-linked income rather than labor and offer each citizen a stake in an AI-sponsored wealth fund.

Former presidential candidate Andrew Yang and Anthropic co-founder Dario Amodei share similar views on the subject, proposing a levy on AI income to fund public welfare.

