Anthropic sues Trump administration: "Supply chain risk" label constitutes illegal retaliation

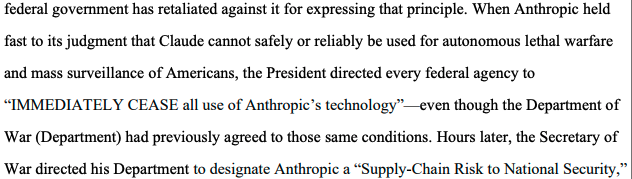

Anthropic, the developer of the AI model Claude, filed a lawsuit against the Trump administration in a California federal court on Monday, accusing the government of an “illegal retaliatory action” after Anthropic refused to allow the military unrestricted use of its technology, including for lethal autonomous weapons and mass domestic surveillance. It is the first time in U.S. history that a domestic company has been designated a military supply chain risk.

Supply Chain Risk Label: Unprecedented Pentagon Designation

The legal battle began with a final decision by Secretary of Defense Pete Hegseth on March 3, 2026, to designate Anthropic a military supply chain risk. The legal consequences of this designation are direct and severe: any individual or company doing business with the military is prohibited from engaging in any transactions with Anthropic, effectively excluding it from federal procurement markets.

Notably, this type of supply chain risk designation has historically been used only for companies believed to be connected to foreign adversaries, never applied to domestic U.S. companies. Anthropic describes this as “unprecedented and illegal” in its lawsuit, asserting: “The Constitution does not permit the government to use its vast power to punish a company for protected speech.”

The lawsuit names a large number of defendants, including Pete Hegseth (Secretary of Defense), Scott Bessent (Secretary of the Treasury), Marco Rubio (Secretary of State), and 17 other government agencies and officials, covering multiple core departments of the U.S. federal government.

Anthropic’s Red Line: Lethal Weapons and Mass Surveillance

(Source: CourtListener)

According to Anthropic’s statements in the lawsuit, Hegseth demanded that the company “completely relinquish its usage restrictions”—but Anthropic refused because these restrictions are part of its government contracts, designed to prevent Claude from being used for two specific purposes:

Lethal Autonomous Weapons Systems: Automated systems that make kill decisions without final human approval.

Mass Surveillance of U.S. Citizens: Using AI technology to collect and analyze large amounts of personal data of citizens.

Anthropic explicitly states in the lawsuit: “Anthropic has never tested Claude for these uses. Currently, Anthropic does not believe that Claude can reliably or safely operate if used to support lethal autonomous warfare.”

This stance aligns with Anthropic’s long-standing AI safety principles but conflicts fundamentally with the Trump administration’s policy direction to expand military AI applications. Notably, the U.S. government and Pentagon have been using Anthropic’s technology since 2024, with Claude being the first AI deployed in classified environments. The designation also coincides with an executive order from Trump instructing federal employees to cease using Claude.

Industry Support: OpenAI and Google Engineers Join Collective Response

Following the legal action, the AI industry responded swiftly and broadly. On Monday, over 30 engineers and scientists from OpenAI and Google, including Google’s Chief Scientist Jeff Dean, submitted an amicus brief publicly supporting Anthropic.

These industry professionals warned: “Allowing this kind of punishment against one of America’s leading AI companies will undoubtedly harm the U.S. in AI and other scientific and industrial competitiveness.” Their statement reflects a broader concern: if the U.S. government uses supply chain risk designations to suppress domestic AI companies, it could weaken America’s core advantage in global AI competition against China.

Frequently Asked Questions

What specific impact does the “supply chain risk” label have on Anthropic?

Under U.S. law, a supply chain risk designation means that any individual or entity doing business with the military cannot also transact with the designated company. This effectively removes Anthropic from the entire federal procurement ecosystem, affecting not only its direct military contracts but also its dealings with every supplier and partner that has government business.

Why does Anthropic refuse to allow lethal weapons-related uses?

Anthropic’s position is that Claude has never been tested or evaluated for lethal autonomous weapons scenarios, and the company currently cannot ensure reliability or safety in such applications. Additionally, Anthropic’s consistent AI safety principles oppose deploying high-risk autonomous decision systems without thorough testing, especially in military contexts involving human lives.

What does the support from Google and OpenAI engineers mean?

The joint support from more than 30 engineers and scientists at competing companies highlights industry-wide concern over government interference through supply chain risk designations. Their backing underscores a shared interest: if the government can use such tools to suppress non-compliant AI companies, any company that refuses to open up its technology could face similar risks.