Will AI Cause a Global Economic Collapse in 2028? A Comprehensive Analysis of the “2028 Global Intelligence Crisis” Report and the Realities of AI Doomsday Predictions

What Is the "2028 Global Intelligence Crisis" Report Really About?

The market has recently focused on “The 2028 Global Intelligence Crisis,” a report co-authored by Citrini Research founder James van Geelen and Alap Shah. Framed from a June 2028 “future perspective,” the report looks back on the preceding years and outlines a scenario in which AI rapidly replaces a significant number of white-collar jobs. In that scenario, the job losses lead to a collapse in consumer demand, shrinking corporate profits, plunging asset prices, and ultimately a global systemic economic crisis.

The authors stress that this is a “scenario analysis,” not a prediction. Yet, the report’s dramatic narrative, combined with current rapid AI advances, has fueled the spread of this “AI doomsday” thesis—sparking investor concerns over tech stocks and employment prospects.

Some economists see it as a “stress test” thought experiment, arguing that its assumptions on replacement speed and policy lag are extreme, and that it overstates the probability of systemic collapse. Some investors view it as a warning about the disruptive power of AI productivity. The report did trigger volatility in tech stocks, but many traders attribute this to sentiment rather than fundamentals. Overall, while the mainstream rejects the “AI doomsday” scenario, it acknowledges that if AI outpaces society’s ability to adapt, structural shocks are likely.

This leads to a core question: Could this scenario really play out in 2028?

Why Does the "AI Doomsday" Narrative Spark Market Panic?

The “AI doomsday” thesis resonates because it taps into three current anxieties:

- AI is replacing high-income knowledge work

- Companies are deploying automation tools at scale

- Productivity gains may suppress labor demand

Unlike past waves of industrial automation, this round of AI primarily disrupts cognitive roles—such as analysis, writing, programming, customer service, and financial research. It directly challenges the job security of the middle class, not just blue-collar roles.

When expectations for jobs and income are shaken, capital markets naturally react ahead of the curve.

But there is often a lag—and a difference in magnitude—between sentiment and reality.

AI Maturity: Could 2028 Really Bring Global Economic Disruption?

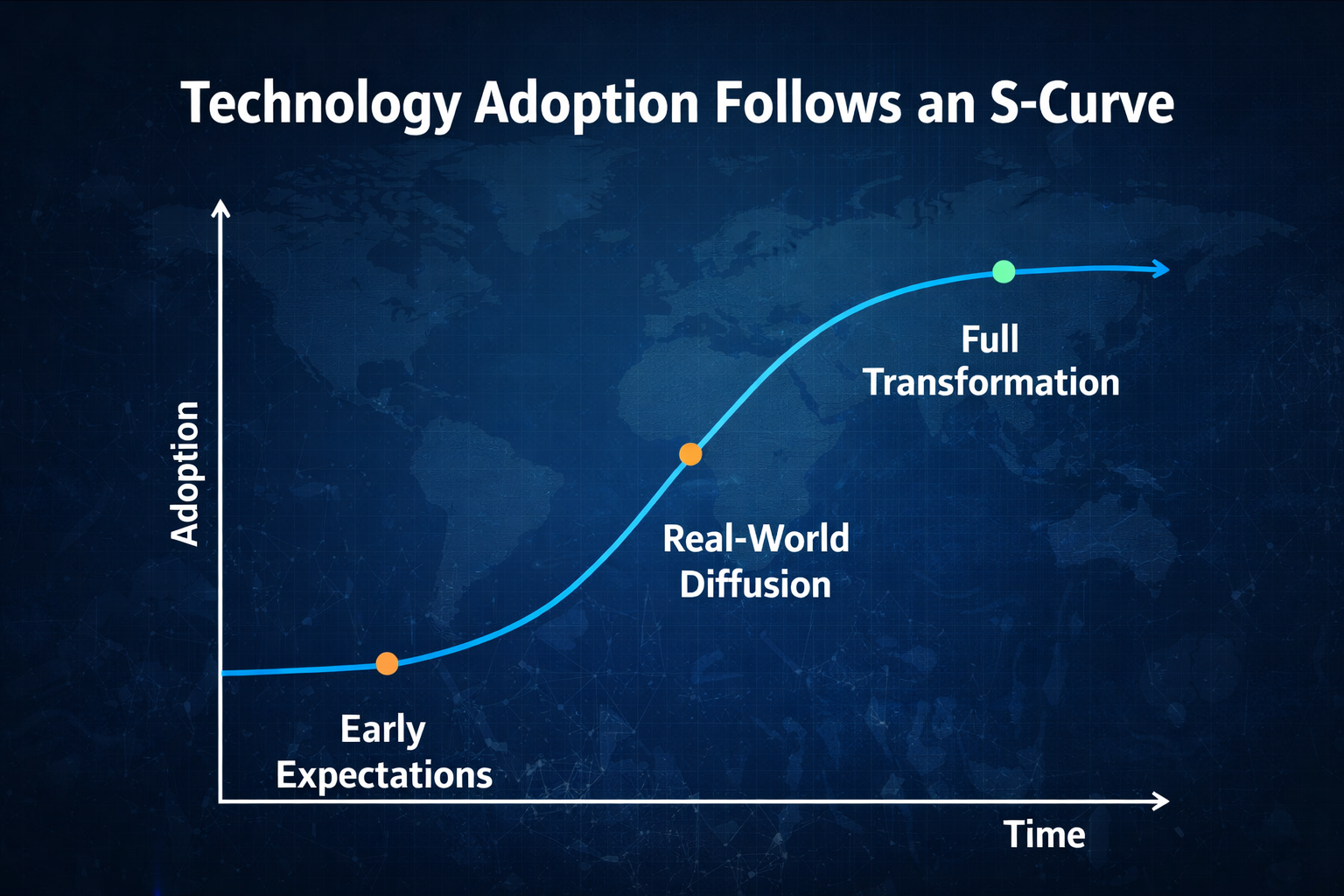

Assessing the risk of systemic collapse starts with the pace of technology diffusion.

Historically, technology adoption follows an S-curve:

- High expectations in the early phase

- Implementation and adjustment in the mid-phase

- Large-scale rollout in the late phase
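
The S-curve described above is often approximated with a logistic function. The sketch below is only an illustration of that shape: the midpoint year and steepness are hypothetical values chosen for the example, not estimates of actual AI adoption.

```python
import math


def adoption_share(year: float, midpoint: float, steepness: float,
                   ceiling: float = 1.0) -> float:
    """Logistic S-curve: share of firms that have adopted by `year`."""
    return ceiling / (1.0 + math.exp(-steepness * (year - midpoint)))


# Hypothetical parameters, assumed purely for illustration.
MIDPOINT = 2032.0  # assumed year when half of eventual adopters have adopted
STEEPNESS = 0.5    # assumed growth rate per year

for year in range(2024, 2041, 4):
    share = adoption_share(year, MIDPOINT, STEEPNESS)
    print(f"{year}: {share:.1%} adopted")
```

Under these assumed parameters, adoption four years before the midpoint is still a small fraction of the eventual total, which is the intuition behind the slow early phase of the curve.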

Even if AI capabilities keep advancing, companies must still:

- Restructure IT systems

- Govern data

- Conduct compliance reviews

- Redesign organizational processes

All of this takes time, and 2028 is not far away. From a macro perspective, the odds of global firms achieving full workforce replacement by then are low. What’s more likely is “localized high efficiency and gradual substitution.”

Technology may advance rapidly, but economic structural change is a slow-moving variable.

Will a White-Collar Unemployment Wave Materialize?

The report’s core chain is:

AI substitution → mass white-collar unemployment → consumption collapse → credit crisis → financial system turmoil

In reality, corporate adjustments are usually incremental:

- Hiring freezes

- Natural attrition

- Department consolidation

- Targeted layoffs

It is rarely a one-off, total replacement of all jobs.

Moreover, new technologies typically create new roles:

- AI management and optimization

- Data governance

- Algorithm security

- Human-machine collaboration design

The real risk is “mid-level skill compression,” not mass unemployment.

So, by 2028, we are more likely to see employment structure polarization—not a complete collapse.

Could AI Trigger a Systemic Financial Crisis?

Systemic financial crises generally require two ingredients:

- High leverage

- A chain reaction of balance sheet failures

The 2008 crisis was an internal collapse of the credit system; the 2020 pandemic was an external shock. The AI shock is more likely to be a “profit structure reshaping event” than a direct impairment of bank assets.

Additionally, today’s macro system includes:

- Automatic stabilizers (unemployment insurance)

- Central banks’ ability to cut rates rapidly

- Fiscal stimulus capacity

This means that even if employment pressures rise, policymakers can intervene swiftly. The odds that AI will instantly collapse the global credit system are low.

What’s the More Likely 2028 Scenario?

Given the patterns of technology adoption and macroeconomic transmission, the most probable 2028 scenario is not “global systemic economic collapse,” but a gradual and profound structural transformation.

1. Tech sector profit margins may rise temporarily. Broad AI adoption will sharply reduce marginal costs, especially in software development, customer service, data analysis, and content creation. Leading firms with advantages in data, compute, and models will further reinforce scale and network effects, concentrating profits at the top. This “efficiency dividend” may temporarily boost overall tech sector profitability.

2. Some white-collar roles will shrink, but not disappear. Functional restructuring is more likely than wholesale replacement. Repetitive, process-oriented, and standardized knowledge work will be hit first, while complex decision-making, interpersonal, and creative roles retain value. The job market will see a clear skill stratification: those who master AI collaboration will see incomes rise, while those who do not will face pressure.

3. Widening income inequality will become a real risk. AI’s productivity dividend may flow first to capital, tech platforms, and high-skill talent, while mid-level knowledge workers lose bargaining power. This uneven distribution could shift consumption patterns and social sentiment, and could build political pressure for redistribution.

4. Market volatility will rise sharply. When productivity expectations are repriced quickly, capital markets often see dramatic valuation cycles. AI concept stocks may soar on high expectations, but if profits lag, volatility will spike.

5. Capital will likely concentrate further in AI infrastructure. Compute, chips, data centers, energy, and cloud platforms will be long-term beneficiaries. Compared to the application layer, foundational resources are harder to replace and command greater pricing power, which drives capital spending toward “compute and energy.”

The most probable outcome of AI is a “structural shock,” not “systemic destruction.” The economic system will not collapse, but resource allocation will change fundamentally.

Risks will center on:

- Asset bubbles

- Overvaluation

- Leverage

If there is a crisis, it will most likely be the bursting of the AI narrative bubble—not AI itself destroying the economy.

How Should Investors Approach AI Risk and Opportunity?

From an investment standpoint, the question isn’t whether you “believe in AI” but where the risks lie. They fall into three categories:

1. Technology risk: Model improvements may slow, compute costs may rise, or tighter regulation could limit adoption. If progress lags market expectations, high valuations will be vulnerable to correction.

2. Narrative risk: Markets often price in a decade of productivity gains in advance. If profits don’t materialize as quickly as expected, valuations can compress rapidly. Most technological revolutions have cycled through “narrative overheat—profit validation—valuation mean reversion.”

3. Structural risk: If AI compresses mid-level roles in the short term and income shifts to capital, consumer demand may weaken—impacting growth in some sectors.
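
The narrative risk above can be made concrete with a toy valuation calculation. This is a minimal sketch, not a pricing model: the growth rates, discount rate, ten-year horizon, and terminal multiple are all assumed values chosen for illustration.

```python
def fair_multiple(growth: float, discount: float, years: int = 10,
                  terminal_multiple: float = 15.0) -> float:
    """Price per $1 of current earnings.

    Sums the present value of `years` of growing earnings, then adds a
    discounted terminal value equal to `terminal_multiple` times the
    final year's earnings.
    """
    present_value = 0.0
    earnings = 1.0
    for t in range(1, years + 1):
        earnings *= 1.0 + growth
        present_value += earnings / (1.0 + discount) ** t
    present_value += terminal_multiple * earnings / (1.0 + discount) ** years
    return present_value


# Hypothetical inputs: the market prices in 25% annual earnings growth,
# but realized growth turns out to be 10%.
priced_in = fair_multiple(growth=0.25, discount=0.10)
realized = fair_multiple(growth=0.10, discount=0.10)
compression = 1.0 - realized / priced_in

print(f"Multiple priced in: {priced_in:.1f}x")
print(f"Multiple justified: {realized:.1f}x")
print(f"Implied valuation compression: {compression:.0%}")
```

The point of the sketch is the sensitivity, not the specific numbers: when a decade of growth is priced in up front, a shortfall in realized growth implies a large drop in the justified multiple even though earnings are still rising.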

Over the long term, AI is likely to boost productivity, but short-term volatility is inevitable. Rational strategies include:

- Diversifying to avoid concentration risk in a single sector

- Focusing on cash flow quality and choosing companies with proven profitability

- Avoiding high leverage to limit losses in volatile periods

- Monitoring policy shifts, as regulation and fiscal changes can alter industry trends

The real risk isn’t the technology itself—it’s how the market values it.

Conclusion: Will AI Cause a Global Economic Meltdown in 2028?

Weighing the pace of technology adoption, corporate transformation cycles, macro policy capacity, and financial system stability, the probability of a global systemic collapse is low. However, the risk of structural employment shocks and heightened market volatility is substantial. “The 2028 Global Intelligence Crisis” is best viewed as a macro stress test—a call to focus on the gap between AI’s speed of substitution and society’s ability to adapt.

AI is not a doomsday machine—it’s an amplifier. It magnifies efficiency, but also amplifies imbalances. What will truly shape 2028 is not just technological capability, but policy response, social adaptability, and the rationality of capital markets.

Rationality matters more than panic.
