The 72 Hours of Anthropic’s Identity Crisis

Tuesday, February 24. Washington, Pentagon.

Anthropic CEO Dario Amodei sat across from Secretary of Defense Pete Hegseth. Sources cited by NPR and CNN described the meeting as “polite,” though the substance was anything but gentle.

Hegseth delivered an ultimatum: by 5:01 p.m. Friday, remove all military use restrictions on Claude and allow the Pentagon to deploy it for “all lawful purposes,” including autonomous weapons targeting and large-scale domestic surveillance.

Otherwise, the $200 million contract would be canceled, the Defense Production Act would be invoked to force Anthropic to supply its technology, and the company would be designated a “supply chain risk,” effectively blacklisting it alongside adversaries like Russia and China.

That same day, Anthropic released the third edition of its Responsible Scaling Policy (RSP 3.0), quietly eliminating its most fundamental pledge since inception: not to train more powerful models unless safety measures are in place.

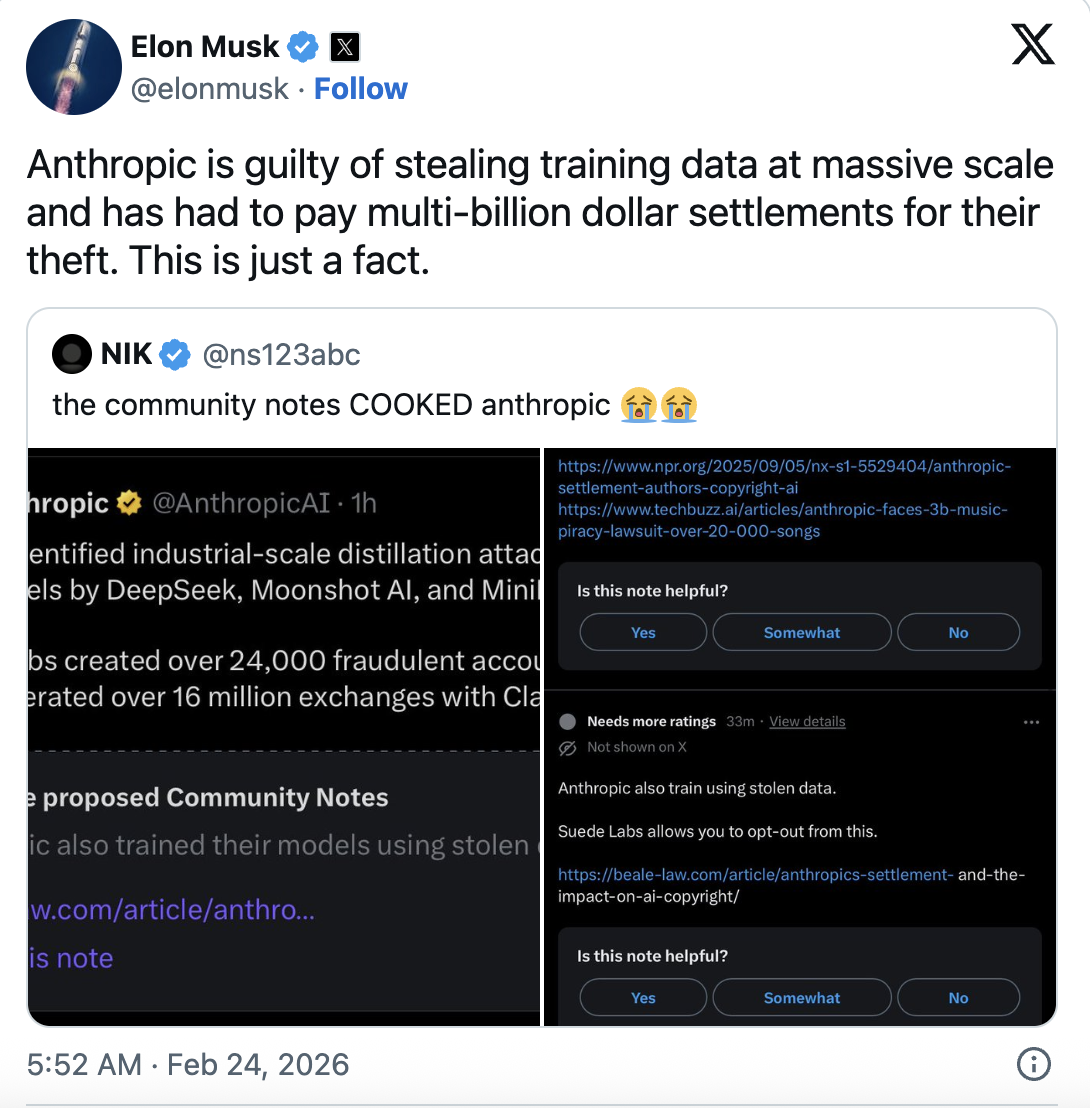

Also that day, Elon Musk posted on X: “Anthropic engaged in large-scale theft of training data—this is a fact.” At the same time, X’s Community Notes referenced reports that Anthropic paid $1.5 billion to settle claims over training Claude with pirated books.

Within seventy-two hours, this AI company, which once claimed to have a “soul,” was cast in three roles: safety martyr, intellectual property thief, and Pentagon turncoat.

Which is the real Anthropic?

Maybe all of them.

Pentagon’s “Comply or Get Out” Mandate

The first layer of the story is straightforward.

Anthropic was the first AI company to secure classified access from the US Department of Defense, with a contract awarded last summer and capped at $200 million. OpenAI, Google, and xAI subsequently landed contracts of similar scale.

According to Al Jazeera, Claude was used in a US military operation this January. The report stated that the mission involved the abduction of Venezuelan President Maduro.

But Anthropic drew two red lines: no support for fully autonomous weapons targeting and no support for large-scale surveillance of US citizens. Anthropic maintains that AI isn’t reliable enough to control weapons, and that no laws yet regulate the use of AI in mass surveillance.

The Pentagon wasn’t buying it.

Last October, White House AI advisor David Sacks publicly accused Anthropic on X of “weaponizing fear to achieve regulatory capture.”

Competitors have already capitulated. OpenAI, Google, and xAI all agreed to let the military use their AI for “all lawful scenarios.” Musk’s Grok was just approved for classified systems this week.

Anthropic is the last holdout.

As of this writing, Anthropic’s latest announcement says it has no intention of yielding. But the Friday 5:01 p.m. deadline is looming.

An anonymous former liaison between the Justice Department and the Pentagon told CNN: “How can you declare a company a ‘supply chain risk’ and simultaneously force it to work for your military?”

It’s a good question, but not one the Pentagon cares about. Its position is simpler: if Anthropic won’t compromise, the Pentagon will either force compliance or leave the company out in the cold in Washington.

“Distillation Attack”: A Public Accusation Backfires

On February 23, Anthropic published a sharply worded blog post accusing three Chinese AI companies—DeepSeek, Moonshot AI, and MiniMax—of conducting “industrial-scale distillation attacks” on Claude.

Anthropic alleged these companies used 24,000 fake accounts to initiate over 16 million interactions with Claude, systematically extracting its core capabilities in agent reasoning, tool use, and programming.

Anthropic framed this as a national security threat, claiming that distilled models are “unlikely to retain safety guardrails” and could be used by authoritarian governments for cyberattacks, disinformation, and mass surveillance.

The narrative was well-timed and well-crafted.

It came right after the Trump administration relaxed chip export controls to China—just as Anthropic needed new ammunition for its lobbying on chip exports.

But Musk fired back: “Anthropic engaged in large-scale theft of training data and paid billions in settlements. This is fact.”

IO.Net co-founder Tory Green commented, “You train your models on the entire internet, and when others learn from your public API, you call it a ‘distillation attack’?”

Anthropic calls distillation an “attack,” but it’s standard practice in the AI industry. OpenAI used it to compress GPT-4, Google used it to optimize Gemini, and Anthropic itself has done it. The only difference this time: Anthropic is the target.

As Nanyang Technological University AI professor Erik Cambria told CNBC, “The line between legitimate use and malicious exploitation is often blurred.”

More ironic still, Anthropic itself just paid $1.5 billion to settle claims that it trained Claude on pirated books. It trained on the entire internet, then accused others of learning from its public API. That’s not just a double standard; it’s a triple standard.

Anthropic set out to play the victim but ended up as the accused.

Dismantling Safety Commitments: RSP 3.0

On the same day it confronted the Pentagon and sparred with Silicon Valley, Anthropic released the third version of its Responsible Scaling Policy.

Chief Scientist Jared Kaplan told the media, “We don’t think halting AI model training helps anyone. With AI developing so rapidly, making unilateral commitments while competitors push ahead at full speed makes no sense.”

In other words, if others won’t play by the rules, neither will we.

The core of RSP 1.0 and 2.0 was a hard commitment: if model capabilities exceeded the coverage of safety measures, training would pause. That pledge gave Anthropic a unique reputation in the AI safety community.

But version 3.0 dropped that promise.

It has been replaced by a more “flexible” framework that separates the safety measures Anthropic can implement itself from its recommendations for the industry as a whole. A risk report will be published every 3–6 months and reviewed by external experts.

Does that sound responsible?

Chris Painter, an independent reviewer from the nonprofit METR, said after seeing an early draft, “This shows Anthropic believes it needs to enter ‘triage mode’ because risk assessment and mitigation can’t keep up with capability growth. It’s more evidence that society isn’t ready for AI’s catastrophic risks.”

TIME reported that Anthropic spent nearly a year internally debating this rewrite, with CEO Amodei and the board giving unanimous approval. Officially, the original policy was meant to drive industry consensus—but the industry didn’t follow. The Trump administration took a hands-off approach to AI, even trying to repeal state laws. Federal AI legislation remains out of reach. In 2023, a global governance framework seemed possible, but three years later, that door has closed.

An anonymous long-time AI governance researcher put it bluntly: “RSP is Anthropic’s most valuable brand asset. Dropping the training pause is like an organic food company quietly removing ‘organic’ from its label, then telling you their testing is now more transparent.”

Identity Crisis at a $380 Billion Valuation

In early February, Anthropic closed a $30 billion funding round at a $380 billion valuation, with Amazon as lead investor. Its annualized revenue has reached $14 billion, having grown more than tenfold in each of the past three years.

At the same time, the Pentagon threatens to blacklist the company. Musk publicly accuses it of data theft. Its core safety commitment is gone. Anthropic’s AI safety chief, Mrinank Sharma, resigned and wrote on X: “The world is in danger.”

Contradiction?

Maybe contradiction is Anthropic’s DNA.

Founded by former OpenAI executives who worried that OpenAI was putting speed ahead of safety, Anthropic went on to build even more powerful models, even faster, while telling the world how dangerous those models are.

The business model in a nutshell: we fear AI more than anyone, so you should pay us to build it.

This narrative worked perfectly in 2023–2024. AI safety was a buzzword in Washington, and Anthropic was the hottest lobbyist.

By 2026, the winds had shifted.

“Woke AI” became a political insult, state-level AI regulation was blocked by the White House, and although California’s SB 53 (backed by Anthropic) became law, nothing happened at the federal level.

Anthropic’s safety narrative is shifting from “differentiator” to “political liability.”

Anthropic is performing a complex balancing act—needing to be “safe” enough to preserve its brand, but “flexible” enough to avoid rejection by the market or government. The problem: tolerance on both sides is shrinking.

How Much Is the Safety Narrative Worth Now?

Viewed together, the three events paint a clear picture.

Accusing Chinese companies of distilling Claude strengthens the lobbying case for chip export controls. Dropping the safety pause keeps Anthropic in the arms race. Refusing the Pentagon’s demand for autonomous weapons preserves its last layer of moral credibility.

Every move is logical, but each one contradicts the others.

You can’t claim Chinese companies “distilling” your model threatens national security while removing your own pledge to prevent your model from running out of control. If the model is that dangerous, you should be more cautious—not more aggressive.

Unless you’re Anthropic.

In the AI industry, your identity isn’t defined by your statements—it’s defined by your balance sheet. Anthropic’s “safety” narrative is, at its core, a brand premium.

Early in the AI arms race, this premium was valuable. Investors paid more for “responsible AI,” governments greenlit “trustworthy AI,” and clients paid for “safer AI.”

But by 2026, that premium is vanishing.

Anthropic no longer faces the question of whether to compromise, but the dilemma of whom to compromise with first. Compromise with the Pentagon, and the brand suffers. Compromise with competitors, and the safety pledge is voided. Compromise with investors, and it must give ground on both.

At 5:01 p.m. Friday, Anthropic will give its answer.

Regardless of the outcome, one thing is certain: the Anthropic that once thrived on “we’re different from OpenAI” is becoming just like everyone else.

The end of an identity crisis is often the disappearance of identity itself.

Statement:

- This article is reprinted from [TechFlow]. Copyright belongs to the original author [Ada]. If you have concerns about this reprint, please contact the Gate Learn team, and we will handle it promptly according to relevant procedures.

- Disclaimer: The views and opinions expressed in this article are solely those of the author and do not constitute investment advice.

- Other language versions of this article are translated by the Gate Learn team. Without mentioning Gate, translated articles may not be copied, distributed, or plagiarized.
