OpenGradient vs Bittensor: Key Differences in Mechanisms and Incentives Across Decentralized AI Networks

As decentralized AI continues to evolve, projects are taking different approaches to two fundamental challenges: ensuring trustworthy computation and improving model performance. When choosing infrastructure, developers often need to balance inference efficiency, training capability, and incentive design. This makes comparing OpenGradient and Bittensor especially relevant.

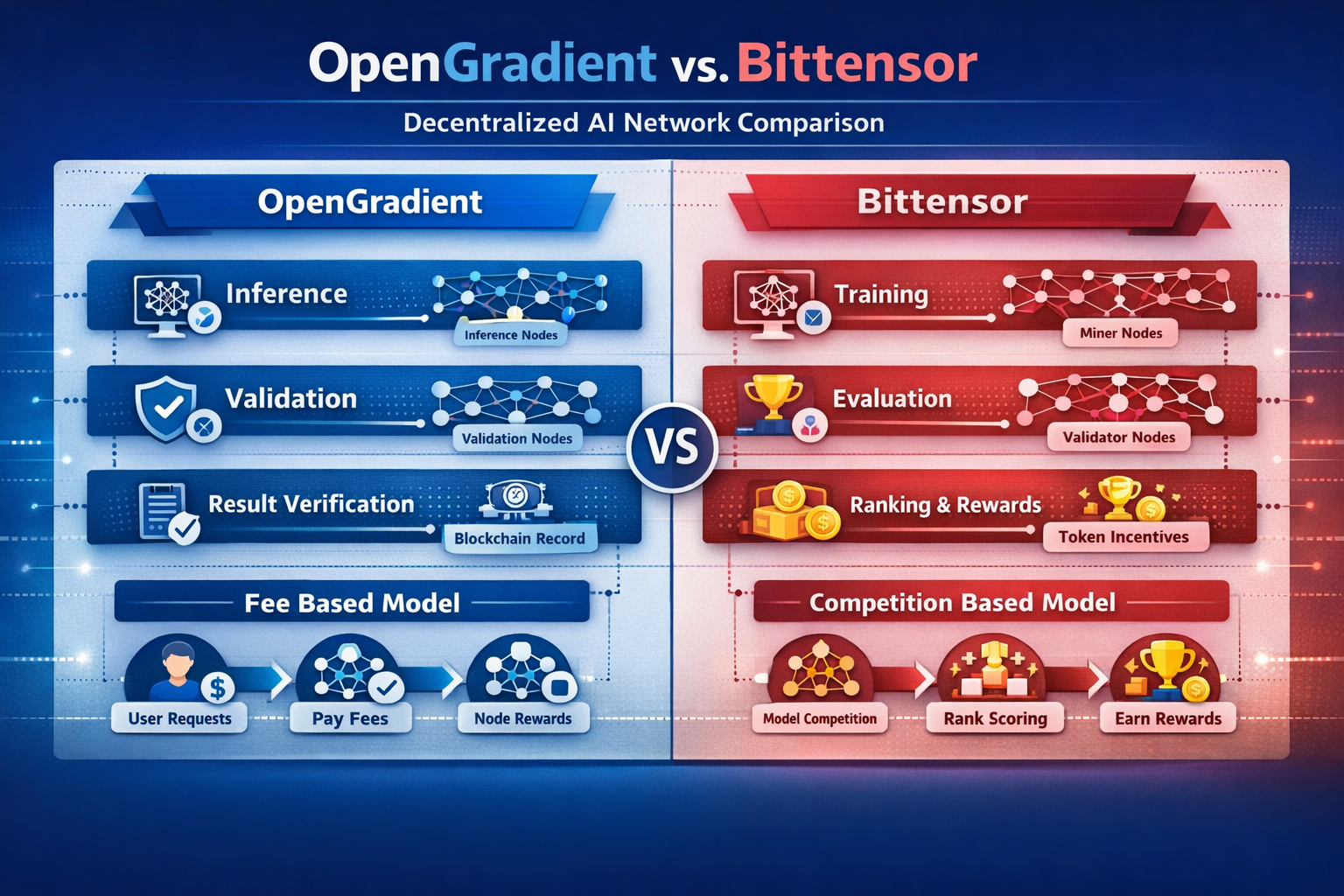

The differences between the two networks fall into three areas: architecture design, computation model, and economic incentives. Together, these define each network’s role and practical use cases.

What Is OpenGradient

OpenGradient is a decentralized computing network focused on executing AI inference and verifying the results.

At the mechanism level, the OpenGradient system assigns user requests to inference nodes for execution, while verification nodes independently validate the outputs. This structure emphasizes verifiable results rather than simply improving model performance.

Structurally, the network consists of inference nodes, verification nodes, and a data layer. Execution and validation are separated, forming a layered computing system.

The significance of this design is that AI inference can run without requiring trust in a single executor, making it suitable for scenarios where accuracy and reliability are critical.
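The separation of execution and validation can be illustrated with a minimal sketch. It assumes verification works by independent re-execution plus a quorum on matching output digests; the article does not say how OpenGradient's verification nodes actually validate results, so `toy_model`, `digest`, `verify`, and the two-thirds quorum are all hypothetical names and values chosen for illustration.

```python
import hashlib


def toy_model(x: float) -> float:
    """Hypothetical deterministic model standing in for a real AI model."""
    return round(2.0 * x + 1.0, 6)


def digest(value: float) -> str:
    """Hash an output so results can be compared by digest."""
    return hashlib.sha256(repr(value).encode()).hexdigest()


def verify(claimed_output: float, verifier_outputs: list[float],
           quorum: float = 2 / 3) -> bool:
    """Accept the claimed output only if enough verifiers reproduce it.

    The quorum threshold is an assumption, not a documented network parameter.
    """
    if not verifier_outputs:
        return False
    claimed = digest(claimed_output)
    matches = sum(1 for out in verifier_outputs if digest(out) == claimed)
    return matches / len(verifier_outputs) >= quorum


x = 3.0
inference_result = toy_model(x)                   # produced by the inference node
verifier_results = [toy_model(x) for _ in range(3)]  # independent re-execution

accepted_honest = verify(inference_result, verifier_results)
accepted_tampered = verify(inference_result + 0.5, verifier_results)
```

In this toy setup the honest result is accepted and the tampered one is rejected, because no verifier reproduces the altered value. A real network would also need to handle non-deterministic models, which plain re-execution does not cover.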

What Is Bittensor

Bittensor is better understood as a decentralized network centered on model training and performance competition.

At the mechanism level, nodes submit model outputs and compete based on quality. The network allocates rewards according to performance, creating a market-driven training environment. Nodes continuously improve their models to earn more rewards.

Structurally, the network includes miner nodes and validator nodes. Validators evaluate output quality and determine reward distribution.

This model encourages continuous model improvement through economic incentives, enabling the network to evolve over time.
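A performance-based reward split of the kind described above can be sketched as follows. This is a deliberately simplified illustration, not Bittensor's actual reward algorithm: it assumes each validator assigns every miner a score between 0 and 1 and that a fixed emission is divided in proportion to the mean score. The function name, miner names, and numbers are hypothetical.

```python
def allocate_rewards(scores: dict[str, list[float]],
                     emission: float) -> dict[str, float]:
    """Split a fixed emission among miners in proportion to mean validator score."""
    mean_scores = {miner: sum(s) / len(s) for miner, s in scores.items()}
    total = sum(mean_scores.values())
    if total == 0:
        # Nobody produced a useful output, so nothing is paid out.
        return {miner: 0.0 for miner in scores}
    return {miner: emission * m / total for miner, m in mean_scores.items()}


# Each list holds the scores that three validators gave one miner.
validator_scores = {
    "miner_a": [0.9, 0.8, 0.7],
    "miner_b": [0.4, 0.5, 0.3],
    "miner_c": [0.0, 0.0, 0.0],
}
rewards = allocate_rewards(validator_scores, emission=100.0)
```

Here `miner_a` receives roughly two thirds of the emission and `miner_c` receives nothing, which is the pressure that pushes nodes to keep improving their models.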

Differences in Network Architecture Design

The architectural contrast reflects different priorities.

At the mechanism level, OpenGradient uses a layered structure that separates inference from verification. Bittensor, by contrast, uses a competitive structure where optimization emerges from node performance comparisons.

From a structural perspective, OpenGradient emphasizes modular layers such as access, execution, and verification. Bittensor focuses more on internal scoring and incentive systems.

| Dimension | OpenGradient | Bittensor |
|---|---|---|
| Architecture Type | Layered structure | Competitive network |
| Core Components | Inference + verification | Training + evaluation |
| Node Relationship | Collaborative | Competitive |
| Scaling Method | Modular expansion | Competition-driven growth |
| Primary Goal | Result reliability | Model optimization |

This distinction shows that OpenGradient is optimized for trustworthy computation, while Bittensor is optimized for improving model performance.

Inference vs Training: Core Mechanism Differences

The most fundamental difference lies in how computation is handled.

At the mechanism level, OpenGradient focuses on inference. It runs existing models on input data and produces verifiable outputs. Bittensor focuses on training, where models are continuously refined through competition.

Structurally, OpenGradient follows a defined pipeline: request routing, inference execution, and result verification. Bittensor operates as an ongoing iterative system where models evolve over time.

This difference means OpenGradient is better suited for real-time inference, while Bittensor is designed for long-term model development.
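The contrast in control flow can be shown with a toy sketch: a single-pass pipeline that returns once per request versus a loop that runs round after round. Everything here is an assumption made for illustration, including the round-robin routing, the squaring function used as a stand-in model, the re-computation check, and the fixed improvement factor per round.

```python
from dataclasses import dataclass


@dataclass
class Result:
    node: str
    output: float
    verified: bool


def handle_request(request_id: int, x: float, nodes: list[str]) -> Result:
    """Single pass through the three stages; returns once per request."""
    node = nodes[request_id % len(nodes)]    # 1. request routing (toy round-robin)
    output = x * x                           # 2. inference execution (stand-in model)
    verified = abs(output - x * x) < 1e-9    # 3. result verification (re-computation)
    return Result(node, output, verified)


def improve_over_rounds(error: float, rounds: int, step: float = 0.5) -> list[float]:
    """Iterative loop: each round shrinks a model's error by a made-up factor."""
    history = [error]
    for _ in range(rounds):
        error *= step
        history.append(error)
    return history


result = handle_request(request_id=7, x=3.0, nodes=["n0", "n1", "n2"])
history = improve_over_rounds(error=1.0, rounds=3)
```

The pipeline has a clear end state for each request, while the loop has no natural stopping point: it simply keeps producing a better (lower-error) model as long as rounds continue.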

How Incentive Models Differ

Incentive design directly shapes node behavior.

At the mechanism level, OpenGradient rewards nodes for performing inference and verification tasks, with incentives driven by user demand. Bittensor distributes rewards internally based on the quality of model outputs.

From a structural perspective, OpenGradient forms a usage-driven economy, while Bittensor operates as a competition-driven economy.

This means OpenGradient revenue is tied to actual compute demand, whereas Bittensor rewards depend more on internal evaluation systems.
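A short arithmetic sketch makes the two economies easier to compare. The fee per request, the verifier share, and the emission size below are hypothetical values chosen for illustration, not parameters of either network.

```python
def usage_driven_revenue(requests: int, fee_per_request: float,
                         verifier_share: float = 0.2) -> dict[str, float]:
    """Revenue scales with demand and is split between the two node roles."""
    total = requests * fee_per_request
    return {
        "inference": total * (1 - verifier_share),
        "verification": total * verifier_share,
    }


def competition_driven_reward(emission: float, my_score: float,
                              total_score: float) -> float:
    """Reward depends on relative score, not on how many requests arrive."""
    if total_score == 0:
        return 0.0
    return emission * my_score / total_score


low_demand = usage_driven_revenue(requests=100, fee_per_request=0.01)
high_demand = usage_driven_revenue(requests=1000, fee_per_request=0.01)
reward = competition_driven_reward(emission=100.0, my_score=0.5, total_score=2.0)
```

Under these assumptions, a tenfold increase in requests raises usage-driven revenue tenfold, while the competition-driven reward changes only when a node's score changes relative to its peers.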

Data and Model Control

Control over models and data affects how open each network is.

In OpenGradient, models are typically provided by users or developers, while nodes focus on execution and verification. In Bittensor, nodes develop and maintain their own models.

Structurally, OpenGradient functions more like a computing platform, while Bittensor resembles a marketplace for models.

This difference highlights that OpenGradient prioritizes compute services, while Bittensor emphasizes the competitive value of models themselves.

Differences in Use Cases and Ecosystem Direction

Use cases reflect underlying design choices.

At the mechanism level, OpenGradient is suited for scenarios requiring real-time inference and verifiable outputs, such as automated decision-making and data analysis. Bittensor is better suited for training-focused environments where improving AI capabilities is the primary goal.

Structurally, OpenGradient’s ecosystem is built around developers and applications, while Bittensor’s ecosystem revolves around model competition and node participation.

These differences show that the two are not direct substitutes, but rather complementary approaches within the broader AI infrastructure landscape.

Conclusion

OpenGradient and Bittensor represent two distinct paths in decentralized AI. OpenGradient focuses on inference and verification, prioritizing trustworthy results. Bittensor focuses on training and competition, prioritizing continuous model improvement.

FAQ

What is the main difference between OpenGradient and Bittensor?

OpenGradient focuses on inference and verification, while Bittensor focuses on model training and competition.

Why does OpenGradient emphasize verification?

Verification ensures that outputs are trustworthy and do not depend on trusting a single node.

How does Bittensor’s incentive model work?

Nodes compete by producing high-quality outputs and earn rewards based on performance.

Are they used for the same scenarios?

Not entirely. OpenGradient is better for inference applications, while Bittensor is better for training and optimization.

Which is better for developers?

It depends on the use case. OpenGradient suits real-time inference needs, while Bittensor is more suitable for model development and optimization.
