Where Is Humanity Headed in the AI Era? Structural Reflections Beyond “The 2028 Global Intelligence Crisis”

Debate around “The 2028 Global Intelligence Crisis” report often centers on a single question: Will AI cause a systemic collapse of the global economy in 2028?

This question is inherently dramatic. However, focusing solely on the binary of “collapse or not” risks overlooking more important structural variables. The real issue isn’t the macro outcome of a specific year, but how humanity’s role in the economic system will evolve as AI becomes the dominant productivity tool.

I. The Essence of AI Disruption: Redistribution of the Production Function

From an economics perspective, technological revolutions fundamentally shift the weighting of factors within the production function.

- In the industrial era, capital amplified physical labor.

- In the information era, technology enhanced information processing efficiency.

- In the AI era, capital—computing power, data, and models—begins to amplify and even replace cognitive labor.

The critical change isn’t just improved efficiency, but who captures a larger share of the value created.

If cognitive tasks (analysis, modeling, content generation, coding, process decision-making) are increasingly performed by AI, labor’s share of total output may decline while the share going to capital rises. This would directly affect income distribution, social mobility, and consumption capacity. AI’s disruption is therefore closer to a redistribution of value than a simple technological upgrade.
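One textbook way to make "who captures a larger share" precise is the Cobb-Douglas production function. The derivation below is an illustrative sketch: the functional form and the assumption of competitive factor markets are ours, not the report’s. Here Y is output, A is total factor productivity, K is capital (including computing power, data, and models), L is labor, w is the wage, and r is the return to capital.

```latex
\begin{aligned}
Y &= A\,K^{\alpha}L^{1-\alpha}, \qquad 0<\alpha<1 \\
w &= \frac{\partial Y}{\partial L} = (1-\alpha)\,\frac{Y}{L}, \qquad
r = \frac{\partial Y}{\partial K} = \alpha\,\frac{Y}{K} \\
\frac{wL}{Y} &= 1-\alpha, \qquad \frac{rK}{Y} = \alpha
\end{aligned}
```

Under this form, simply accumulating more capital leaves the shares unchanged. Labor’s share falls only if AI raises α, which is what "shifting the weighting of factors" means here. With hypothetical numbers, moving α from 0.35 to 0.45 would cut labor’s share of output from 65% to 55%. Cobb-Douglas fixes the elasticity of substitution between capital and labor at 1; models in which capital substitutes more easily for labor can produce a falling labor share from capital accumulation alone.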

II. Why Is the Probability of “Systemic Collapse” Limited?

Systemic financial crises typically require a broken credit chain, severe asset-liability mismatches, and excessive leverage. Historically, major crises have resulted from internal structural imbalances within the financial system—not from productivity tools themselves.

AI is a productivity-enhancing technology shock. It may alter profit structures and employment patterns, but it doesn’t inherently undermine bank asset quality or the functioning of the credit system.

Furthermore, technological diffusion faces real-world friction:

- Enterprise IT architecture reconstruction takes time

- Data governance and compliance systems present barriers

- Reorganizing processes and the division of labor is slow

Even as AI models rapidly improve, full-scale replacement depends on organizational transformation. This “institutional and organizational friction” creates a buffer.

In the short term, we’re more likely to see industry differentiation and profit revaluation than a sudden failure of the global credit system.

III. The Real Risk: Structural Mismatches

Structural mismatches pose a more realistic risk than outright collapse.

The first mismatch stems from skill structure. Much of the current workforce was trained in an environment where “human cognition was scarce.” If standardized analysis and generative tasks are automated, these skills will need to be repriced.

The second mismatch comes from income structure. If productivity gains from AI concentrate among owners of computing power and technology platforms while labor’s bargaining power declines, consumer demand may come under pressure.

The third mismatch arises from expectation management. Capital markets often price in anticipated growth for the next decade. When actual earnings fall short of expectations, valuation corrections amplify volatility.

These risks may combine to create periodic turbulence. However, turbulence and collapse are fundamentally different concepts.

IV. How Will Employment Structures Change?

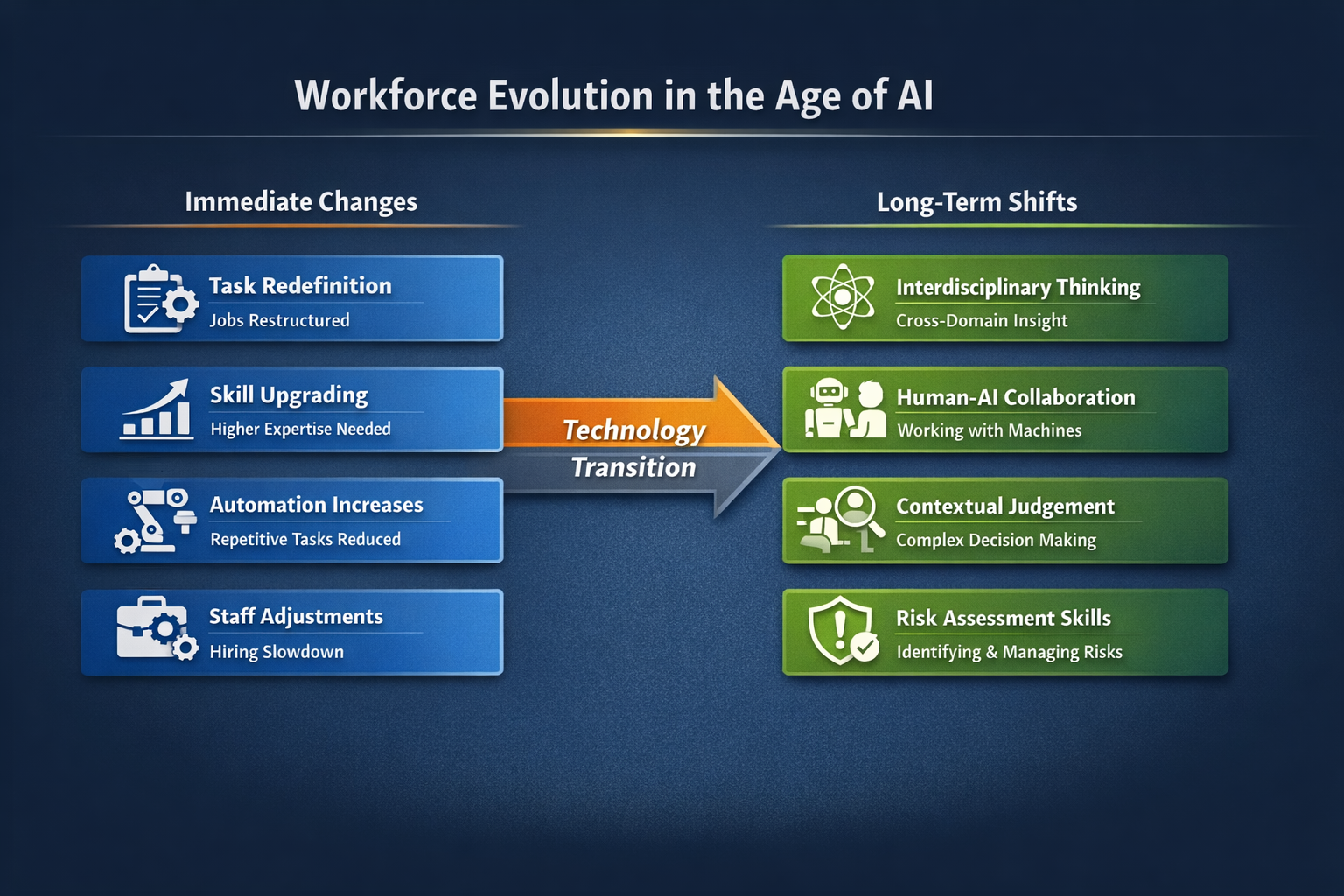

Technological substitution usually follows a “task replacement” path, rather than causing entire jobs to disappear.

A job typically consists of multiple tasks, some of which can be automated while others require human judgment and coordination. The more likely outcomes are:

- Job content changes

- Skill requirements rise

- Repetitive tasks shrink

- Integrated decision-making tasks grow
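To make the task-replacement path concrete, here is a toy calculation. The job, its task list, the weekly hours, and which tasks count as automatable are all hypothetical numbers chosen for illustration, not data from the report. It also assumes a 40-hour position and that leftover work from several people can be pooled freely, which is what "position consolidation" implies.

```python
import math
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    automatable: bool  # hypothetical classification, not empirical data


def automated_share(tasks: list[Task]) -> float:
    """Fraction of a job's weekly hours spent on automatable tasks."""
    total = sum(t.hours_per_week for t in tasks)
    automated = sum(t.hours_per_week for t in tasks if t.automatable)
    return automated / total


def positions_needed(headcount: int, tasks: list[Task],
                     hours_per_position: float = 40.0) -> int:
    """Positions still required once automatable tasks are removed,
    assuming the remaining work from all holders can be pooled."""
    remaining = sum(t.hours_per_week for t in tasks if not t.automatable)
    return math.ceil(headcount * remaining / hours_per_position)


# Hypothetical analyst role: 40 hours per week split across five tasks.
analyst_job = [
    Task("data cleaning", 10, True),
    Task("report drafting", 12, True),
    Task("standard modeling", 6, True),
    Task("client judgment calls", 8, False),
    Task("cross-team coordination", 4, False),
]

if __name__ == "__main__":
    print(f"Automated share of hours: {automated_share(analyst_job):.0%}")
    print(f"Positions needed for 5 analysts: {positions_needed(5, analyst_job)}")
```

In this toy case most of each analyst’s hours are automatable, yet the job does not vanish: the judgment and coordination tasks remain, and the work of five people consolidates into two positions. The point is the mechanism, not the specific numbers.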

In the short term, companies may adjust their workforce through reduced hiring, position consolidation, and natural attrition, rather than large-scale one-off replacement. The long-term trend is clear: the value of standardized cognitive work will decline, while the value of complex judgment and system integration skills will rise.

This means education and training systems must shift toward:

- Interdisciplinary understanding

- Human–machine collaboration

- Situational judgment

- Risk identification

rather than simple memorization and formula calculation.

V. Will AI Change Social Power Structures?

If computing power and data become core production assets, those who own infrastructure and algorithmic resources will gain greater bargaining power.

This could lead to two outcomes:

- Scale effects intensify further

- Regulation and institutional innovation accelerate

Historical experience shows that when technological concentration increases, institutions tend to adjust accordingly. Antitrust, tax reform, and industry standards may all become topics of future debate.

In short, technological expansion and institutional restructuring typically evolve in tandem.

VI. The Core of Human Value in the AI Era

As machines far surpass humans in speed and precision, human value won’t disappear—it will shift to higher-level domains.

These may include:

- Value orientation and judgment

- Institutional design and oversight

- Risk bearing

- Creative integration

- Building social trust

AI can provide computational results, but “which path to take” remains a decision at the institutional and power level. This means human roles may shift from executors to participants in decision-making and authorization.

VII. More Likely Scenarios

Based on historical patterns of technological diffusion and on macroeconomic mechanisms, the more probable scenarios include:

- AI deeply penetrates multiple industries, but at uneven rates

- Tech company profit margins rise periodically

- Mid-skilled positions are compressed, while demand for high-end positions increases

- Widening income gaps become a focus of policy debate

- Market valuation volatility intensifies

- Capital concentrates on computing power, energy, and infrastructure

These changes are more like a structural reshuffling than an economic collapse. If a crisis occurs, it’s more likely to stem from asset bubbles and excessive leverage than from AI itself.

VIII. Core Challenges During the Transition Period

The true test of the AI era lies in how the transition period is managed.

During this stage:

- Some skills depreciate rapidly

- Retraining cannot keep pace

- Income disparities widen

- Market expectations are repeatedly revised

Policy and institutions must strike a balance between efficiency and stability.

Whatever the approach, a sustainable long-term path depends on genuine productivity gains matched by sufficient demand, not on permanently distorted incentives.

Conclusion: The Issue Is Not “Destruction,” but “Reconstruction”

“The 2028 Global Intelligence Crisis” presents a high-impact scenario that helps us consider extreme risks. From macro and historical perspectives, AI is more likely to drive long-term structural transformation than short-term systemic destruction.

The real question isn’t: Will AI destroy the economy?

It’s: When cognitive ability is no longer scarce, how will humanity redefine value, distribution, and power structures?

Technology itself is neutral. The future depends on institutional choices, education strategies, and capital allocation. The AI era is not an endpoint—it’s the beginning of a new order.
